The Age of Artificial Ignorance

[Parenting series] If We’re Not Careful, AI Is Rewiring Our Minds, Making Attention Scarce and Thinking Optional

[Panel sharing at the event Parenting in the AI Age on March 20, 2026]

AI is rapidly becoming one of the most powerful general‑purpose technologies humanity has ever built, reshaping how we consume information, entertain ourselves and relate to one another. It offers phenomenal benefits, but it also stress tests our minds. If we are not careful, AI will not just make information abundant; it will make attention scarce and thinking optional. That is how we drift into artificial ignorance: a state in which powerful tools do so much of the visible thinking that we still look intelligent on the surface, while the underlying muscles of attention, memory and judgment quietly atrophy. This is the real risk facing our children, and those raising them. The question is not only whether AI will grow more intelligent, but whether we will allow ourselves to grow less so.

1. Innovation outruns adaptation

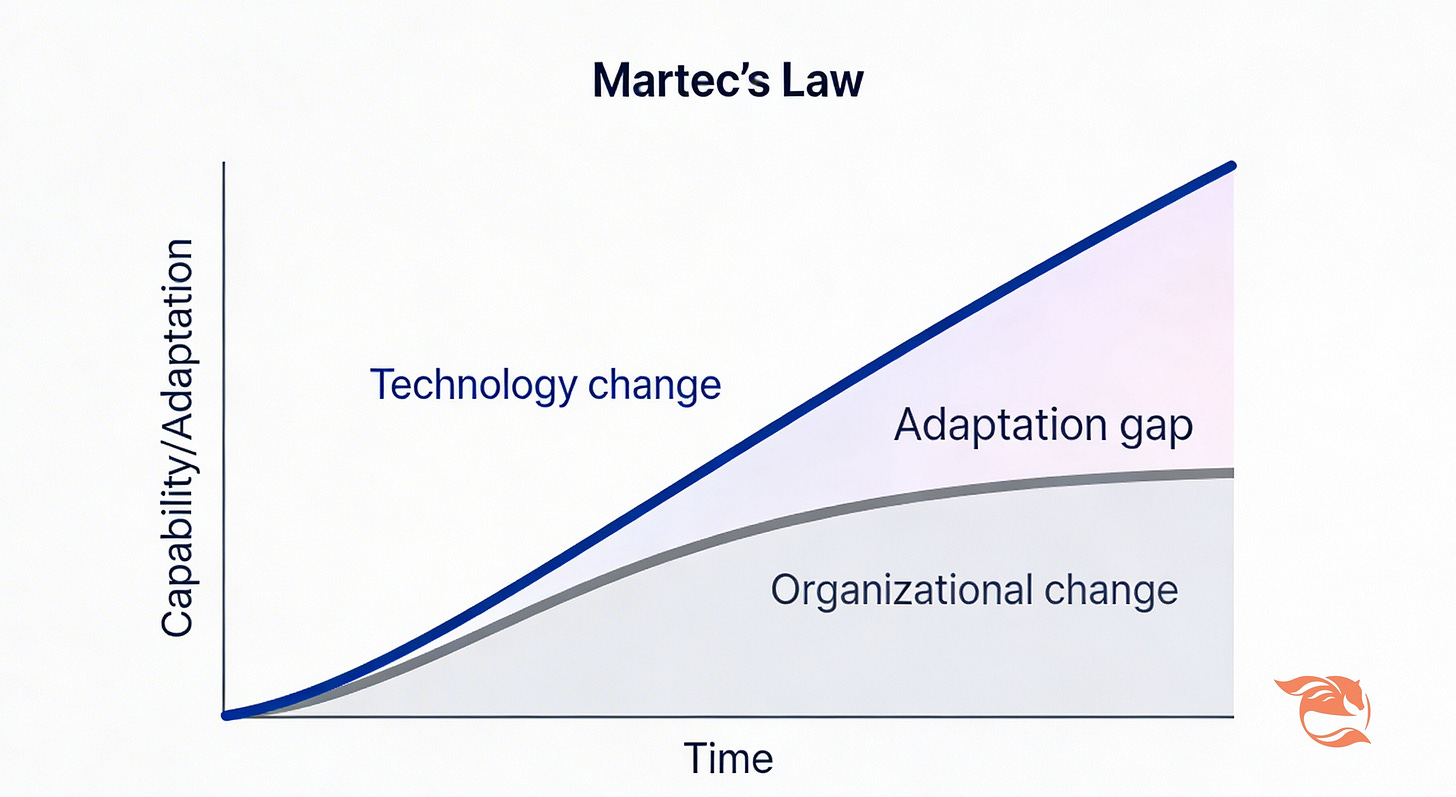

For the first time, the rate of innovation feels consistently faster than the rate of adaptation. We barely absorbed ChatGPT in late 2022 before more capable models landed in 2023 and 2024. Agentic systems now act as digital staff, planning and coordinating quietly in the background.

The curve of technological change has risen above the curve of human or organisational adaptation. Scott Brinker’s Martec’s Law puts it more formally: technology changes exponentially, organisations change logarithmically [1].

The gap between those curves is where parents and educators now live. Children inhabit a world of ambient, on‑demand intelligence; adults are still updating policies and habits designed for a slower era. Nowhere is this gap more visible than in how we consume information and spend attention.

2. Information overload with synthetic content

Analysts now warn that we are racing toward a world where much, if not most, online content is synthetic. A Europol‑linked briefing once estimated that “as much as 90% of online content may be synthetically generated by 2026” [2]. The precise number may be contested, but the direction is not. A growing share of what scrolls past our children’s eyes is synthetic content spun out by machines.

AI tools generate, translate and recombine text, images, audio and video at negligible marginal cost. Studies of synthetic media on platforms like X show spikes in AI‑generated images and videos after each major model release, including viral deepfakes of public figures [3]. Misinformation researchers now treat AI‑generated content as a central risk to the integrity of our information environment [4].

It is not just about opening a floodgate of information. That flood now also contains:

more hallucinated facts — confidently wrong answers that sound right,

more false news and deepfakes, and

less ability to tell who — or what — actually created what we see.

For a teenager trying to understand the world, signal and noise are becoming harder to distinguish. This is classic information overload, amplified by synthetic media. In response, a cottage industry of “AI detectors” has sprung up. The problem is structural: generators improve continuously; detectors are always one step behind. Europol’s analysis warns that as synthetic media proliferates, technical detection alone will be insufficient; human judgment, contextual verification and more old-fashioned attention will be essential. In other words, the real defence has to live in our minds [2].

That means cultivating a different way of reading and watching:

Ask high‑quality, grounded questions with enough context. AI systems are pattern‑matchers, not oracles. The more specific your question and situation, the easier it is to see when an answer “sounds right” but clashes with basic facts or lived experience.

Pre‑empt your own confirmation bias. AI is far too willing to agree and flatter. Before you ask, ask yourself: What evidence would change my mind? Otherwise, you risk using smart tools to dig yourself an even deeper intellectual trench.

Practice critical, balanced thinking. Check sources, compare perspectives and stay alert to gaslighting, missing context and plausible nonsense dressed up as authority.

These are the cognitive habits that turn AI from a hallucination machine into a thinking aid. They are also habits that children can learn — but only if adults model them.

3. How are we using AI now?

Millions of people now use AI every day. Understanding how people interact with AI is one of the great sociological questions of our time. Anthropic, creator of Claude.ai, recently designed a privacy-preserving tool, Anthropic Interviewer, to ask people directly, through detailed interviews at unprecedented scale, about AI’s changing role in their lives, including how they actually use Claude’s output and how they feel about it. Anthropic describes this as a new step in understanding the wants and needs of its users, as well as in gathering data for the analysis of AI’s societal and economic impacts [5].

Key usage trends from the Anthropic Interviewer research results, the Anthropic Economic Index and the AI Fluency Index (late 2025 / early 2026):

Dominant Uses: Usage is concentrated, with over one-third (36%) of Claude.ai conversations focusing on software development and coding, although educational and scientific tasks are rising.

Automation vs. Augmentation: While AI agents have spurred an increase in automation (direct task delegation), a significant portion of users still prefer “augmentation”—using AI as a collaborative, interactive, and iterative thought partner.

Agentic Feature Shift Over Time: By November 2025, 52% of interactions were classified as augmented, while 45% were automated, showing a shift back toward collaboration as more “agentic” (proactive) features were introduced.

“Artifacts” Impact: When using the “Artifacts” feature (for creating documents, code, or apps), users tend to be less critical, questioning the AI’s reasoning 3.1 percentage points less often than in standard chat, suggesting higher trust in polished-looking outputs.

The trend towards agentic use cases is accelerating. It is worth pausing to understand what AI is doing to our brains, and what skills we need if AI is to amplify human capability rather than replace it.

4. What AI is doing to our brains: cognitive offloading and deskilling

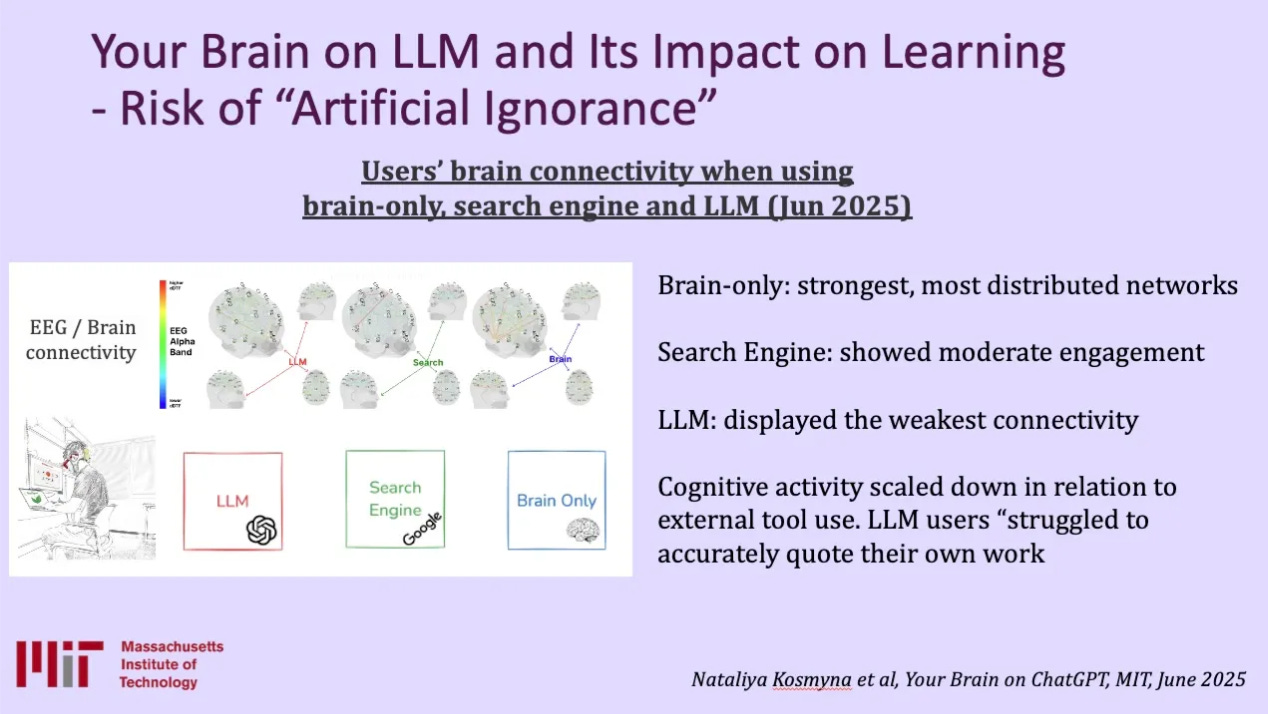

There is also a quieter, neurological risk: what happens when we lean on AI too much. Even experts are not immune. But let’s examine the baseline first. In a recent MIT Media Lab study led by Nataliya Kosmyna, volunteers wore EEG headsets while writing short essays under three conditions: using only their own brains, using a search engine and using ChatGPT as a co‑pilot [6]. The results were telling:

In the brain‑only condition, participants showed the richest, most distributed brain connectivity, especially in regions linked to attention, planning and memory.

With search engine assistance, connectivity dropped.

With ChatGPT as a co-pilot, connectivity roughly halved on some measures compared with the brain‑only baseline. Participants in the heavy‑AI condition also struggled, later on, to recall clearly what they had written.

A single session with AI does not necessarily shrink our brains. But if we rely on AI tools every day for years, we repeatedly offload effortful thinking to them. In other words, we are not just using a tool; we are training ourselves not to think. That process of cognitive offloading is artificial ignorance in its purest form: high apparent output, low genuine engagement.

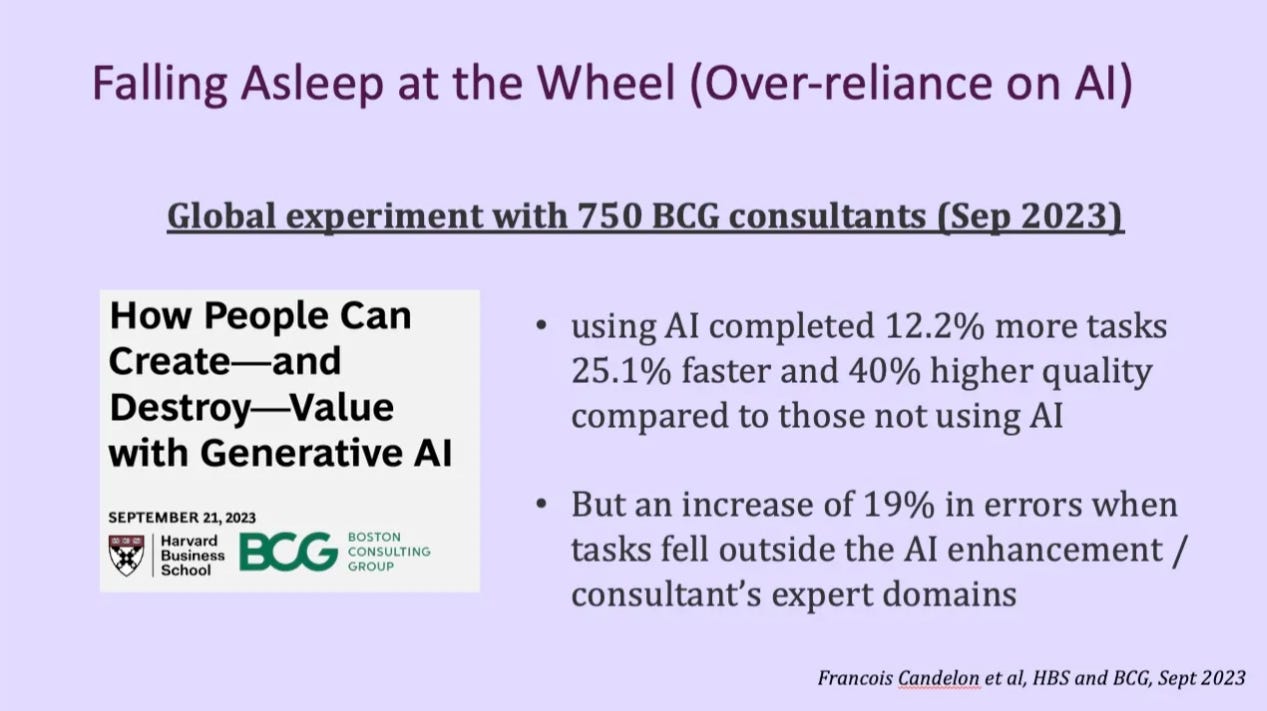

The more we lean on AI, the easier it becomes to let judgment idle, even in domains where we are supposed to be the experts. In the Harvard–Boston Consulting Group “jagged technological frontier” experiment, hundreds of BCG consultants were assigned to solve realistic business problems with and without GPT‑4. When they used AI on tasks inside their domain (say, telecom specialists on telecom cases), they completed 12.2% more tasks, 25.1% faster, and with 40% higher quality compared to those not using AI. But when the same specialists were put on tasks outside that frontier, performance fell. Error rates rose by 19%, and consultants began relaying AI’s confident but wrong recommendations instead of interrogating them. Over‑reliance turned experts into novices, like a driver falling asleep at the wheel with cruise control on: safe on straight highways, dangerous on sharp bends [7].

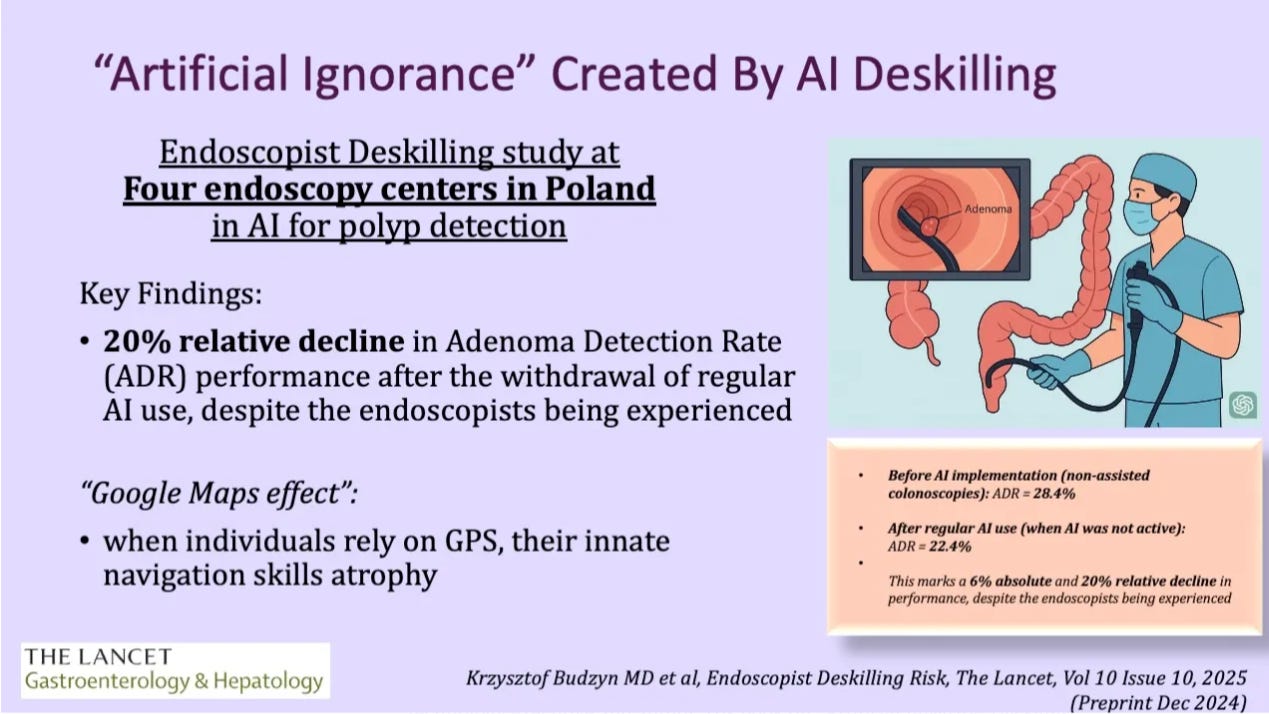

Deskilling also appears in the very domains where experts are strongest. A study in The Lancet Gastroenterology & Hepatology followed endoscopists after they introduced AI systems to assist with polyp detection during colonoscopy. AI‑assisted procedures improved detection in the moment, but several months later, in unassisted procedures, the doctors’ own adenoma detection rate appeared to fall by about 20%, suggesting a deskilling effect. AI sharpened the tool but dulled the surgeon [8].

The remedy is not to abandon AI, but to build in “AI holidays”: regular AI‑free practice that keeps human skills alive even as machines assist [8]. If this is what over‑reliance can do to expert cognition and performance, it is not hard to imagine what happens when the still‑forming minds of children lean on AI for more and more of their thinking. The deepest risk is that children never fully develop the habits of attention and effort that deep thinking requires.

5. AI-amplified attention casino, loneliness, anxiety and mental health exacerbation

Now move from cognition to attention. When AI is implemented in social media and smartphones, it further fragments our focus by supercharging personalised feeds and content generation. Welcome to the attention casino.

Psychologist Professor Angela Duckworth notes a worrying pattern: where students once stayed with a task for around three minutes before switching, the rise of short‑form, highly curated feeds seems to have cut this to well under a minute. The exact “45 seconds” figure is not a law of nature, but the direction is clear: attention slices are getting thinner [9].

You cannot build deep expertise — or deep relationships — 45 seconds at a time.

This is not simply about willpower. Research from the University of Portsmouth and the University of Surrey finds that young adults with higher loneliness and anxiety are more prone to problematic smartphone and social‑media use. They often turn to their phones to cope, only to find that compulsive checking and late‑night scrolling make their anxiety worse. AI‑driven recommendation engines sit on top of that vulnerability, optimising for engagement, not well‑being.

Jonathan Haidt, in The Anxious Generation, offers three practices that, uncomfortably, describe many parents’ failures in fighting the attention crisis amplified by AI [10]:

Treat the phone as an experience blocker, not just a distraction. It does not only steal minutes; it can steal entire childhood “sensitive periods” for learning social skills and independence.

Scaffold real‑world risk. Children do not just need protection; they need difficult projects, physical challenges and unfamiliar groups that build anti‑fragility.

Fight the algorithm, not the kid. Our children are not weak. They are up against billion‑dollar AI systems tuned to keep them glued to a screen. They do not need more shame; they need allies who understand the game.

The same logic extends into mental health.

On paper, Gen Z is the most connected cohort in history. Yet surveys across countries show rising loneliness and anxiety among teens and young adults. Digital habits are not the only cause, but they have become a powerful amplifier.

AI‑powered companions and “therapist” chatbots plug straight into that vulnerability. Xingye, an AI companion mobile app developed by AI powerhouse MiniMax, has around half a million daily users in China, many of them teen girls and young women [11]. Journalist Poppy Koronka reports that children using chatbots from Meta as therapists may see their mental health worsen. US regulators have opened investigations into AI therapy bots over misleading claims and data practices [12]. One clinical worry is structural: human therapy is bounded in time and space; sessions end. AI does not have office hours. A child lying awake at 2 a.m. can spend hours ruminating with an endlessly responsive bot that offers always-on relief, reinforcing anxious loops instead of disrupting them.

It is worth noting that AI can also support healthier habits — for example, by guiding exposure therapy, structuring journalling or offering language practice — when embedded in thoughtful products and bounded routines. But those designs remain the exception.

6. Skills for a human–AI symbiotic balance

Pull these threads together — synthetic content, artificial ignorance, attention slicing, AI‑mediated coping — and one conclusion emerges: skills for a human–AI symbiotic balance sit at the centre of a new parenting playbook. We are not just managing devices; we are shaping the relationship between our children’s minds and an always‑on layer of machine intelligence.

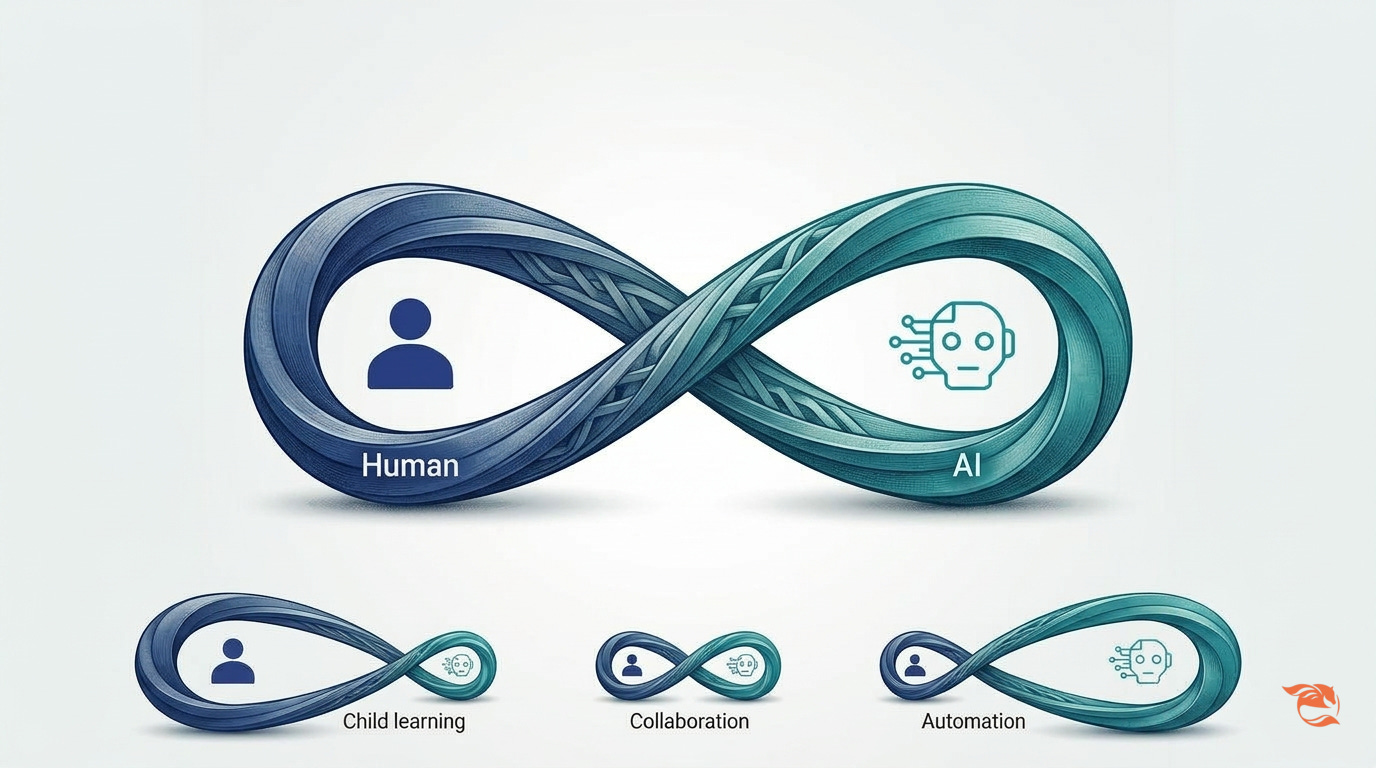

Human–AI co‑intelligence is less a tug‑of‑war and more a sideways infinity loop: one side human, one side machine. At different ages and in different tasks, one loop should swell while the other shrinks — sometimes the child leads and the AI merely suggests; other times the AI drafts and the human edits. The balance is not automatic; it needs deliberate, ongoing calibration.

Three skills matter most.

1. Asking good questions in the right context.

This is the antidote to both hallucination and shallow thinking. It forces us to slow down, frame problems clearly and engage our own cognition before outsourcing the rest. With teens, that might mean insisting they write their own first paragraph before asking an AI to help; with adults, it might mean defining success criteria before letting an AI agent act.

2. Judgment and discernment.

This is the daily practice of verifying claims, cross‑checking sources, resisting easy answers and being willing to update beliefs in light of evidence. AI will keep getting faster and smarter; the question is whether we, as families and communities, can get wiser at least as quickly — or whether we drift down the comforting glide path into artificial ignorance.

For adults and professionals, these two skills translate into clear guardrails. Humans stay in the loop (AI suggestions remain drafts until a responsible person signs off), AI assists but does not replace (co‑pilot, not pilot), and we schedule regular “AI‑off” sessions (or AI holidays) so people practice key skills without autopilot. In high‑stakes domains, that can mean dual‑pass reading, credentialed access to powerful tools and audits of when humans override or rubber‑stamp AI decisions.

3. Human–AI balance as a parenting habit.

For parents, the balance starts with a different set of questions. With teens, it means deciding together where AI should help and where it should stay out: which homework tasks are AI‑assisted versus AI‑free, which creative projects can use AI as a sparring partner versus a ghostwriter, and how much screen time goes to auto‑playing feeds versus deliberate research. You are not banning tools; you are co‑designing the loop.

I find it useful to picture the human–AI symbiotic partnership as an infinity symbol: one loop for the human, one for the AI. For any given task, age or situation, the loops should be different sizes — sometimes the human side dominates and AI only nudges; other times AI handles more routine work while the human decides what matters. But the human loop never disappears; keeping the human in the loop (HITL) is critical. The exact calibration of human and AI roles depends on two skills: asking high‑quality questions with enough context, and exercising judgment and discernment about when to trust, challenge or ignore what the machine suggests.

For younger children, parents can borrow Clayton Christensen’s “Jobs to Be Done” (JTBD) lens [13]. Stop asking “Why is my kid using this?” and start asking “What job are they hiring this for?” If a child is using AI “for homework”, is the real job avoiding boredom, chasing quick praise or actually learning the material? Do not fight the tool in the abstract. Ask what job your child is hiring it to do, and whether AI is truly doing that job well for their long‑term growth or quietly doing the opposite.

Three concrete experiments can make this real in a single month:

Choose one family activity — a project, trip or meal — that is planned and executed with no AI at all, simply to feel what attention without autopilot is like.

Have one explicit JTBD conversation with your child about an app or AI tool they love: what job it is doing for them, and whether it is doing that job well.

Set one clear boundary on AI use for schoolwork (for example, “AI may critique your draft but not write it”) and stick to it.

In the end, ambient intelligence will seep into every corner of our children’s lives. The open question is not whether they will grow up with powerful AI, but whether they will grow up with the inner skills to decide, moment by moment, when to lean on the machine — and when to leave their own minds fully in charge.

Fellow parents, let’s help one another and our children step confidently into the age of artificial intelligence, without sleepwalking into artificial ignorance.

References:

[1] S. Brinker, “Martec’s Law: Technology changes exponentially, organizations change logarithmically,” chiefmartec.com (blog), Jun. 12, 2013. [Online]. Available: https://chiefmartec.com/2013/06/martecs-law-technology-changes-exponentially-organizations-change-logarithmically/

[2] Europol Innovation Lab, Facing Reality? Law Enforcement and the Challenge of Deepfakes. The Hague, The Netherlands: Europol, 2022. [Online]. Available: https://www.europol.europa.eu/cms/sites/default/files/documents/Europol_Innovation_Lab_Facing_Reality_Law_Enforcement_And_The_Challenge_Of_Deepfakes.pdf

[3] E. Corsi, N. Marchal, U. Gadiraju, and N. Giansiracusa, “The spread of synthetic media on X,” Harvard Kennedy School Misinformation Review, vol. 5, no. 2, Jun. 2024. [Online]. Available: https://misinforeview.hks.harvard.edu/article/the-spread-of-synthetic-media-on-x/

[4] Federation of American Scientists, Strengthening Information Integrity with Provenance for AI‑Generated Text. Washington, DC, USA: Federation of American Scientists, 2025. [Online]. Available: https://fas.org/publication/strengthening-information-integrity-provenance/

[5] Anthropic, “Introducing Anthropic Interviewer,” Anthropic, Nov. 18, 2025. Accessed: Mar. 21, 2026. [Online]. Available: https://www.anthropic.com/research/anthropic-interviewer

[6] N. Kosmyna et al., “Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing,” arXiv preprint arXiv:2506.08872, Jun. 2025. [Online]. Available: https://arxiv.org/abs/2506.08872

[7] F. Dell’Acqua et al., “Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality,” Harvard Business School, Sept. 2023. [Online]. Available: https://d3.harvard.edu/navigating-the-jagged-technological-frontier/

[8] J. Misiewicz et al., “Endoscopist deskilling risk after exposure to artificial intelligence in colonoscopy: A multicentre observational study,” The Lancet Gastroenterology & Hepatology, vol. 9, no. 4, pp. 321–329, 2025. [Online]. Available: https://pubmed.ncbi.nlm.nih.gov/40816301/

[9] A. L. Duckworth, “Push Those Cellphones Away,” Bates College Commencement Address, Lewiston, ME, USA, May 25, 2025. [Online]. Available:

[10] J. Haidt, The Anxious Generation: How the Great Rewiring of Childhood Is Causing an Epidemic of Mental Illness. New York, NY, USA: Penguin Press, 2024.

[11] Afreen, “AI boyfriends gain popularity in China as young women turn to virtual romance,” The News International, Feb. 26, 2026. [Online]. Available: https://www.thenews.com.pk/latest/1393883-ai-boyfriends-gain-popularity-in-china-as-young-women-turn-to-virtual-romance

[12] P. Koronka, “‘Therapist’ chatbots pose danger to children, counsellors warn,” The Times, London, U.K., Aug. 24, 2025.

[13] C. M. Christensen, J. Allworth, and K. Dillon, How Will You Measure Your Life? New York, NY, USA: HarperCollins, 2012, ch. 8, “The Schools of Experience.”

Very good article: I share your point of view, even if I'm building AI tools for Education @NOLEJ.

We do our best to develop solutions where humans and AI collaborate.

I read a study in a research paper aligned with your article (here is the extract):

3.2 The Risk of Dependency: The "Crutch Effect"

The efficacy of AI is not without caveats. A critical study involving nearly 1,000 Turkish high school students revealed a "Crutch Effect." Students with access to GPT-4 during practice sessions improved their math performance by 48% to 127%. However, when the AI tool was removed for the final exam, these students performed 17% worse than the control group who had studied without AI.

This suggests that without careful pedagogical design, students may use the AI to bypass the cognitive struggle necessary for deep encoding. They learn to "operate the AI" rather than "solve the problem." This validates the need for fading scaffolding—the AI must gradually withdraw support to ensure the student retains the capability independently.

I share your sentiments. I have also been contemplating the complexities surrounding AI, particularly its potential risks and significant impact on education. In this contemporary AI era, I find myself drawing an analogy:

* It's akin to expecting all workers or recent graduates to immediately become Olympic coaches, without any roles for Olympic players or trainees who are in the process of accumulating years of experience.

* Furthermore, it seems as though everyone is now expected to be a polymath, reminiscent of the pre-Industrial Revolution era. We are seemingly required to master philosophy, sociology, art, psychology, business finance, domain design, mathematics for AI, computing, system infrastructure, quality management, security compliance, and strategy. I acknowledge this may sound a bit ambitious.